The scale factor is a central concept in data science, engineering, and other technical disciplines when optimizing performance and scalability. The principle looks simple, but it has real consequences for how efficiently a system operates under varying conditions. Whether you are scaling the inputs to a machine learning model or managing the expansion of a software application, choosing the scale factor well can be the difference between operational success and failure.

Key Insights

- The scale factor directly impacts the performance metrics of machine learning models.

- An improperly chosen scale factor can lead to model inefficiency and suboptimal performance.

- A practical approach is to leverage real-world data to fine-tune the scale factor for better outcomes.

Understanding the scale factor is fundamental for optimizing model performance. In machine learning, a scale factor is a multiplier, usually combined with an offset, that maps input features onto a standard range, typically between 0 and 1. Putting features on a common scale helps gradient-based training converge faster and keeps any single feature from dominating distance or penalty calculations, which often improves accuracy. For example, consider a regression model trained with gradient descent or regularization on features with vastly different scales, such as income in dollars and age in years. Without rescaling, the higher-scale features can dominate the fit, leading to biased predictions.

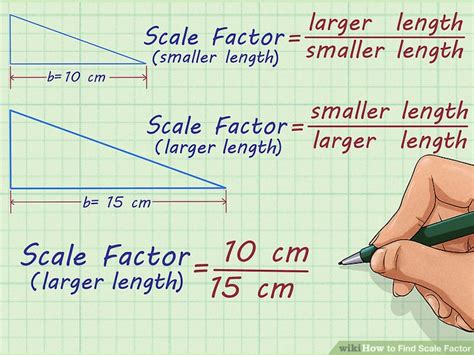

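In technical terms, min-max normalization can be written as \( S = \frac{X - \min(X)}{\max(X) - \min(X)} \), where \(X\) is the feature value and \(\min(X)\) and \(\max(X)\) are the smallest and largest values of that feature in the data. The scale factor here is \( \frac{1}{\max(X) - \min(X)} \), applied after subtracting \(\min(X)\). This formula maps every feature onto the range 0 to 1, so features measured in different units become comparable and the machine learning model can learn more effectively.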
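As a minimal sketch of the min-max formula, the following pure-Python function rescales one feature column to the range 0 to 1. The function name `min_max_scale` and the sample values are illustrative assumptions, not part of any particular library. Mapping a constant column to zeros is also an assumption, made here only to avoid dividing by zero.

```python
def min_max_scale(values):
    """Rescale a sequence of numbers to [0, 1] using (x - min) / (max - min)."""
    lo, hi = min(values), max(values)
    span = hi - lo
    if span == 0:
        # Constant feature: the formula is undefined, so return zeros (an assumption).
        return [0.0 for _ in values]
    return [(x - lo) / span for x in values]


# Hypothetical feature columns measured on very different scales.
incomes = [30_000, 45_000, 60_000, 120_000]
ages = [22, 35, 47, 61]

scaled_incomes = min_max_scale(incomes)
scaled_ages = min_max_scale(ages)
```

After scaling, both columns span the same range, so neither dominates purely because of its units. In practice the minimum and maximum should be computed on the training data only and then reused for validation and test data.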

An effective application of the scale factor is seen in natural language processing (NLP) models. Text data often contains words with varying frequencies. By applying a scale factor to word counts or term frequency-inverse document frequency (tf-idf) scores, NLP models can achieve more balanced training data, leading to improved predictive performance. This is particularly important in domains such as sentiment analysis and spam detection, where input text features must be normalized for the model to generalize well.
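To make the NLP case concrete, here is a small sketch that computes tf-idf weights and then scales each document vector to unit length (L2 normalization), so long and short documents become comparable. The function name `tfidf_l2`, the whitespace tokenizer, the smoothed idf variant, and the sample sentences are all assumptions chosen for illustration; production code would normally rely on an established library.

```python
import math
from collections import Counter


def tfidf_l2(docs):
    """Return one {term: weight} dict per document, scaled to unit L2 norm."""
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)
    # Document frequency: number of documents that contain each term.
    df = Counter(term for tokens in tokenized for term in set(tokens))
    vectors = []
    for tokens in tokenized:
        counts = Counter(tokens)
        # Smoothed idf (an assumption; several variants are in common use).
        weights = {
            term: count * (math.log((1 + n_docs) / (1 + df[term])) + 1)
            for term, count in counts.items()
        }
        norm = math.sqrt(sum(w * w for w in weights.values()))
        # The per-document scale factor is 1 / norm; empty documents stay empty.
        vectors.append({t: w / norm for t, w in weights.items()} if norm else weights)
    return vectors


# Hypothetical two-document corpus.
docs = ["free prize claim your free prize now", "meeting moved to monday"]
vectors = tfidf_l2(docs)
```

Each vector now has length 1, so a repeated term such as "free" still outweighs a term that appears once in the same document, while document length no longer inflates the weights.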

When considering software scalability, the scale factor often refers to the rate at which a system can be expanded to accommodate increased load. In cloud computing, for instance, scaling can involve increasing the number of virtual machines (VMs) to handle more users or transactions. The scale factor here determines how efficiently resources are allocated.

To delve deeper, consider a microservices-based architecture where services are independently scalable. The scale factor determines how many instances of a service should be launched based on current demand. Setting it too high leads to over-provisioning, which is costly, and setting it too low leads to under-provisioning, which degrades performance. A practical approach is to use real-time monitoring data to adjust the scale factor dynamically based on load metrics such as CPU usage, memory consumption, and network latency.
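The dynamic adjustment described above can be sketched as a proportional rule: the scale factor is the ratio of the observed metric to its target, and the instance count is multiplied by that ratio. This is similar in spirit to the rule used by autoscalers such as the Kubernetes Horizontal Pod Autoscaler, but the function name `desired_replicas`, its parameters, and the bounds below are hypothetical and chosen only for illustration.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=10):
    """Proportional scaling: instance count follows the observed-to-target load ratio."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    scale_factor = current_metric / target_metric
    # Round up so the service is never sized below what the ratio asks for.
    wanted = math.ceil(current_replicas * scale_factor)
    # Clamp to the configured bounds to limit both cost and under-provisioning.
    return max(min_replicas, min(max_replicas, wanted))


# Hypothetical readings: average CPU utilization in percent, with a 60% target.
scale_up = desired_replicas(4, current_metric=90.0, target_metric=60.0)
scale_down = desired_replicas(4, current_metric=20.0, target_metric=60.0)
capped = desired_replicas(8, current_metric=95.0, target_metric=50.0)
```

The upper and lower bounds are what guard against the two failure modes named above: the maximum caps cost when load spikes, and the minimum keeps a baseline of capacity when load is low.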

FAQ

What is the best way to determine an appropriate scale factor?

The best approach is to use a combination of domain knowledge and empirical testing. Begin with a standard normalization technique, and iteratively adjust based on model performance metrics such as accuracy, precision, and recall.

Can a scale factor be too high?

Yes, an excessively high scale factor can lead to diminishing returns where additional resources yield marginal gains. It's crucial to monitor performance metrics and adjust the scale factor dynamically to maintain optimal efficiency.

In conclusion, the concept of scale factor is pivotal in both machine learning and software scalability. Through careful consideration and practical application, one can significantly enhance the performance and efficiency of complex systems. Leveraging real-world data and performance metrics will ensure that the scale factor is both appropriate and effective, paving the way for successful outcomes in any technical endeavor.