Degrees of freedom (DF) come up in almost every statistical analysis. They count the values in a calculation that remain free to vary once the constraints imposed on the data have been taken into account. This guide explains the idea and shows how it is used in t-tests, analysis of variance, and confidence intervals.

Introduction to Degrees of Freedom

Degrees of freedom are fundamental in statistical calculations, particularly in hypothesis testing, confidence intervals, and analysis of variance (ANOVA). DF counts the independent pieces of information available for estimating a parameter. Put simply, it is the number of values in a dataset that could still change after the constraints on the data, such as a fixed mean, have been accounted for.

Key Insights

- DF is essential for determining the precision of an estimate.

- It influences the critical values used in statistical tests.

- A clear understanding of DF leads to better data interpretation.

The Basics of Degrees of Freedom

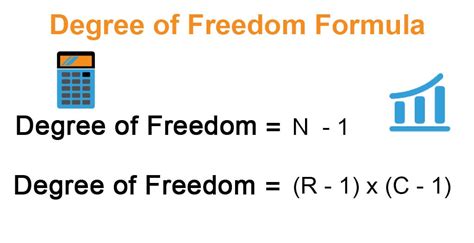

Not every value in a dataset is entirely free, because estimating a quantity from the data imposes a constraint. Take the sample mean: once the mean of n observations is fixed, you can choose n-1 of the values freely, but the last one is then determined, since all n values must still average to that mean. This is why the degrees of freedom for a single sample are usually n-1. The sample variance shows the idea in practice: the sum of squared deviations from the sample mean is divided by n-1 rather than n, which makes it an unbiased estimate of the population variance. (Its square root, the sample standard deviation, is the usual estimate of spread, although it is not exactly unbiased.)
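The n-1 idea can be checked with a short Python sketch. The four data values are made up for illustration, and only the standard library `statistics` module is used: `statistics.variance` divides by n-1, while `statistics.pvariance` divides by n.

```python
import statistics

# Hypothetical data, chosen only to keep the arithmetic easy to follow.
data = [4.0, 7.0, 9.0, 12.0]
n = len(data)
mean = statistics.mean(data)

# Knowing the mean and any n-1 values pins down the remaining value.
known = data[:-1]
last_value = n * mean - sum(known)

# Sum of squared deviations from the sample mean.
ss = sum((x - mean) ** 2 for x in data)

sample_var = ss / (n - 1)   # divides by the degrees of freedom
population_var = ss / n     # divides by the number of observations

print("recovered last value:", last_value)
print("variance with n-1:", sample_var, "matches", statistics.variance(data))
print("variance with n:  ", population_var, "matches", statistics.pvariance(data))
```

The recovered last value equals the one that was left out, which is the sense in which only n-1 of the values are free.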

Applications in Hypothesis Testing

In hypothesis testing, degrees of freedom play a pivotal role. In a t-test, the DF selects which t-distribution the test statistic is compared against, and therefore which critical value applies. For a one-sample t-test of a mean, DF = n-1. Because the t-distribution has heavier tails at low DF, the same test statistic yields a larger p-value when the sample is small, which affects whether we reject or fail to reject the null hypothesis. A real-world application is medical research, where t-tests are often used to assess whether a new drug’s effect differs significantly from that of a placebo.
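As a rough illustration, the sketch below computes a one-sample t statistic and its DF by hand. The sample, the hypothesized mean `mu0`, and the function name `one_sample_t` are all hypothetical. The critical value 2.262 is the standard two-sided 5% table entry for DF = 9; for other DF you would look up the corresponding entry.

```python
import math
import statistics

def one_sample_t(sample, mu0):
    """Return (t statistic, degrees of freedom) for a one-sample t-test."""
    n = len(sample)
    mean = statistics.mean(sample)
    sd = statistics.stdev(sample)        # uses n-1 in the denominator
    standard_error = sd / math.sqrt(n)
    return (mean - mu0) / standard_error, n - 1

# Hypothetical measurements and null-hypothesis mean.
sample = [5.1, 4.9, 5.6, 5.8, 6.0, 5.3, 5.5, 5.7, 5.2, 5.9]
mu0 = 5.0

t_stat, df = one_sample_t(sample, mu0)

# Two-sided critical value at alpha = 0.05 for DF = 9, from a t-table.
T_CRIT_DF9 = 2.262
reject = abs(t_stat) > T_CRIT_DF9

print(f"t = {t_stat:.3f}, DF = {df}, reject H0: {reject}")
```

A statistics library would normally supply the p-value directly; the table lookup here just keeps the role of DF visible.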

Analysis of Variance (ANOVA)

ANOVA is a statistical method used to test for differences between the means of multiple groups. Here, the degrees of freedom are split into two components: between groups and within groups. The between-group DF is the number of groups minus one, while the within-group DF is the total number of observations minus the number of groups. For instance, in an ANOVA comparing the mean scores of three teaching methods, the between-group DF is 2 and the within-group DF is n-3, where n is the total number of students across all groups. The two components add up to the total DF, n-1. Both values are needed to look up the F-distribution that decides whether the differences between groups are statistically significant.
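The DF partition for a one-way ANOVA can be sketched as follows. The scores and the function name `one_way_anova` are invented for illustration, and the code stops at the F statistic rather than computing a p-value.

```python
import statistics

def one_way_anova(groups):
    """Return (df_between, df_within, F) for a list of groups of scores."""
    k = len(groups)                             # number of groups
    n_total = sum(len(g) for g in groups)       # total observations
    grand_mean = statistics.mean(x for g in groups for x in g)

    # Variation of the group means around the grand mean.
    ss_between = sum(
        len(g) * (statistics.mean(g) - grand_mean) ** 2 for g in groups
    )
    # Variation of observations around their own group mean.
    ss_within = sum(
        (x - statistics.mean(g)) ** 2 for g in groups for x in g
    )

    df_between = k - 1
    df_within = n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return df_between, df_within, f_stat

# Hypothetical scores for three teaching methods, three students each.
method_a = [70, 72, 68]
method_b = [75, 78, 77]
method_c = [80, 82, 84]

df_between, df_within, f_stat = one_way_anova([method_a, method_b, method_c])
df_total = df_between + df_within   # equals n_total - 1

print(f"DF between = {df_between}, DF within = {df_within}, total = {df_total}")
print(f"F = {f_stat:.2f}")
```

Each sum of squares is divided by its own DF to give a mean square, and the F statistic is the ratio of the two mean squares.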

What happens if I have missing data?

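It depends on how the missing values are handled. With complete-case (listwise) deletion, which is the default in many software packages, observations with missing values are dropped and the DF is computed from the observations that remain. For a one-sample t-test, that means DF is the number of non-missing values minus one. Other approaches, such as imputation, use their own DF adjustments.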
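A minimal sketch of the complete-case situation, assuming missing values are simply dropped before a one-sample test. The measurements are hypothetical, and `None` stands in for a missing observation.

```python
# Hypothetical measurements; None marks a missing observation.
measurements = [12.1, None, 11.8, 12.4, None, 12.0]

# Complete-case (listwise) deletion: keep only the observed values.
observed = [x for x in measurements if x is not None]

n_recorded = len(measurements)   # rows in the dataset
n_observed = len(observed)       # values actually available
df = n_observed - 1              # DF for a one-sample t-test on these data

print(f"{n_recorded} rows, {n_observed} observed, DF = {df}")
```

The DF follows the observed count, not the number of rows in the dataset.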

How does DF affect confidence intervals?

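Degrees of freedom affect the width of confidence intervals through the critical value. A t-based interval for a mean is the estimate plus or minus the critical t value times the standard error. With fewer DF, the critical value is larger, so the interval is wider and reflects the extra uncertainty of estimating the variance from a small sample. As DF increases, the critical value shrinks toward the normal-distribution value (about 1.96 for a 95% interval), and the interval narrows.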
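The effect can be seen with a few standard two-sided 95% critical values from a t-table. In this sketch the sample standard deviation is held at a hypothetical 1.0 so that only the sample size, and hence the DF, changes; the name `ci_half_width` is illustrative.

```python
import math

# Two-sided 95% critical values from a standard t-table, keyed by DF.
T_CRIT_95 = {4: 2.776, 9: 2.262, 29: 2.045}
Z_CRIT_95 = 1.960   # limiting value as DF grows without bound

def ci_half_width(sample_sd, n):
    """Half-width of a 95% t-interval for a mean (n must be 5, 10, or 30)."""
    df = n - 1
    return T_CRIT_95[df] * sample_sd / math.sqrt(n)

# Hold the standard deviation fixed so only n (and DF) varies.
half_widths = {n: ci_half_width(1.0, n) for n in (5, 10, 30)}

for n, hw in half_widths.items():
    print(f"n = {n:2d}, DF = {n - 1:2d}, t = {T_CRIT_95[n - 1]}, half-width = {hw:.3f}")
```

Two things narrow the interval as n grows: the standard error falls with the square root of n, and the critical value falls toward 1.960 as DF rises.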

Mastering the concept of degrees of freedom equips you with a valuable tool in statistical analysis, helping you make more informed decisions based on data. By understanding and applying DF correctly, you can enhance the reliability and accuracy of your statistical conclusions.